On the AI team at Loop, we are building automation to tackle the complex problem of understanding supply chain paperwork. We need to process everything from OCR-friendly PDFs to phone pictures snapped by truck drivers. For example, a single shipment document might list dozens of addresses, only some (or none!) of which are labeled. Some documents include scribbled, barely legible dates. Even worse, we may receive invoices from both our client and a carrier that disagree on the services rendered. In every scenario, our clients expect us to make sense of the data we receive.

We rely heavily on LLMs as a core component of our automation. Their ability to reason across diverse problems makes them a good-enough baseline for a variety of tasks. However, their generality is also a limitation. While LLMs excel in well-defined, tightly constrained settings, they struggle in complex domains where the rules are nuanced, edge cases are abundant, and context is rarely self-evident. To use LLMs effectively on our data, we have to teach them the domain. The catch is that the expertise needed to process our data intelligently isn’t in any training set. It lives in the minds of supply chain professionals who’ve spent years working in the field. Our goal on the AI team is to close the knowledge gap between these domain experts at Loop and the automation we deploy.

Normalization at Loop

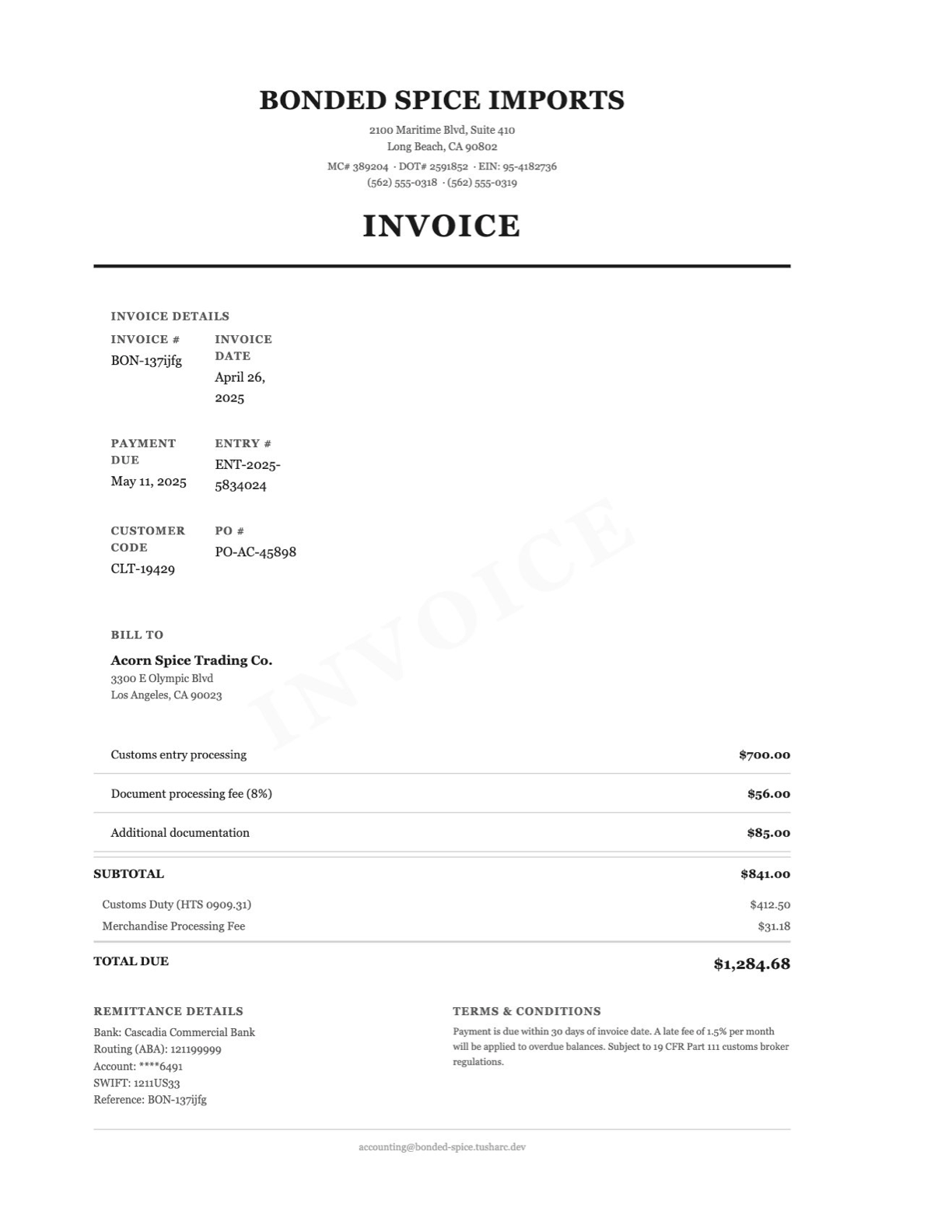

Our ingress includes all sorts of shipment-related documents including invoices, bills of lading, and delivery receipts. Let’s look at the following freight invoice as an example:

This PDF contains names, addresses, reference numbers, contact information, line items, and a listed total amount. We need our automation to extract enough information from this PDF to identify:

- What kind of document is this (invoice, delivery receipt, bill of lading)?

- What significant information does it contain?

- What shipment is it relevant to?

To answer these questions, we perform three steps, each of which is broken down into smaller units we call atomic tasks.

1. Categorization

Our first question is simply, "What kind of document is this?"

Answering this question narrows down what we can expect to learn from the document. For instance, we don't expect an invoice to include the date that a trucking carrier picked up the shipment. Likewise, we wouldn’t expect a bill of lading to include the total amount billed for the shipment. Categorizing the document tells us which extraction tasks to run on an otherwise arbitrary PDF.

We first attempt to categorize a document by generating a multimodal embedding that combines its text, layout, and visual appearance. We then compare it against embeddings of pages we've seen before, looking for the closest match. If no clear match emerges, we fall back to an LLM, using a generic prompt to guide its classification.
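A minimal sketch of the embedding lookup with an LLM fallback, assuming embeddings are plain float vectors compared by cosine similarity and that a "clear match" means the best similarity clears a fixed threshold. The `LabeledPage` type, the 0.9 threshold, and the `classify_with_llm` callback are hypothetical stand-ins; the post does not describe Loop's actual matching criterion.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass
class LabeledPage:
    """A previously categorized page and its (hypothetical) multimodal embedding."""
    category: str
    embedding: list[float]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def categorize(
    page_embedding: list[float],
    known_pages: list[LabeledPage],
    classify_with_llm: Callable[[], str],
    min_similarity: float = 0.9,  # assumed threshold for a "clear match"
) -> str:
    # Score the new page against every page we've categorized before.
    scored = [(cosine_similarity(page_embedding, p.embedding), p.category) for p in known_pages]
    if scored:
        best_score, best_category = max(scored)
        if best_score >= min_similarity:
            return best_category
    # No clear nearest neighbor: fall back to a generic LLM classification prompt.
    return classify_with_llm()
```

The linear scan keeps the sketch short; with many known pages, an approximate nearest-neighbor index would be the natural replacement.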

For the example freight invoice, the ‘Invoice’ label and ‘Invoice Details’ section make it evident that this should be categorized as an invoice.

2. Extraction

Once categorized, each document goes through a set of extraction tasks specific to its type. For an invoice, that means determining the date, total amount, and specific charges (among other fields). The challenge here is that every invoice issuer has their own format. These extraction tasks normalize that variation, producing standardized output regardless of which invoice format we receive.
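The category-to-task mapping and the standardized output can be pictured as plain data. This is a hypothetical sketch: the task names, the `EXTRACTION_TASKS` table, and the `NormalizedInvoice` fields are illustrative choices, not Loop's schema.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import Decimal
from typing import Optional

# Hypothetical: which atomic extraction tasks run for each document category.
EXTRACTION_TASKS: dict[str, list[str]] = {
    "invoice": ["carrier", "invoice_identifier", "invoice_date", "total_amount", "line_items"],
    "bill_of_lading": ["carrier", "shipper", "consignee", "pickup_date"],
    "delivery_receipt": ["carrier", "consignee", "delivery_date"],
}


@dataclass
class LineItem:
    description: str
    amount: Decimal


@dataclass
class NormalizedInvoice:
    """One standardized shape, whatever layout the issuer used."""
    invoice_identifier: Optional[str] = None
    invoice_date: Optional[date] = None
    total_amount: Optional[Decimal] = None
    line_items: list[LineItem] = field(default_factory=list)


def tasks_for(category: str) -> list[str]:
    # Unknown categories map to no extraction tasks.
    return EXTRACTION_TASKS.get(category, [])
```

Using `Decimal` rather than `float` for money avoids binary rounding surprises when totals are later compared against line items.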

These tasks are typically handled by an LLM guided by generic base prompts that remain consistent across all invoices. To give the LLM the context it needs, we supplement these prompts with structured information already extracted by our in-house document understanding models. This information includes things like every reference number, date, and address found on the page. While LLMs are powerful general reasoners, they struggle with the kinds of layout-heavy edge cases that are routine in supply chain paperwork: addresses printed vertically down the side of a page, column headers spatially distant from their values, or reference numbers scattered across stamps and annotations. These are exactly the cases our in-house models are built for, and their output becomes the foundation for the LLM to reason over.
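A minimal sketch of how a generic base prompt might be supplemented with the structured candidates from upstream models. The `PageCandidates` shape and the section wording are assumptions for illustration; the post only says these candidates include reference numbers, dates, and addresses.

```python
from dataclasses import dataclass, field


@dataclass
class PageCandidates:
    """Structured output from upstream document-understanding models (hypothetical shape)."""
    reference_numbers: list[str] = field(default_factory=list)
    dates: list[str] = field(default_factory=list)
    addresses: list[str] = field(default_factory=list)


def _section(title: str, values: list[str]) -> str:
    # Render one candidate list; say so explicitly when nothing was found.
    body = "\n".join(f"- {v}" for v in values) or "- (none found)"
    return f"{title}:\n{body}"


def build_extraction_prompt(base_prompt: str, candidates: PageCandidates, page_text: str) -> str:
    """Append pre-extracted candidates to a generic base prompt for one atomic task."""
    return "\n\n".join([
        base_prompt,
        _section("Reference numbers found on the page", candidates.reference_numbers),
        _section("Dates found on the page", candidates.dates),
        _section("Addresses found on the page", candidates.addresses),
        f"Page text:\n{page_text}",
    ])


prompt = build_extraction_prompt(
    "Determine the invoice date. Answer in YYYY-MM-DD.",
    PageCandidates(reference_numbers=["PO-AC-45898"], dates=["4/26/2025"]),
    page_text="Invoice details ... Invoice date 4/26/2025 ...",
)
```

The idea is that listing candidates explicitly lets the LLM choose among values the layout-aware models already located, rather than re-deriving them from raw text.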

For the invoice date task on the example freight invoice, the LLM reasons “in the document, under 'Invoice details', I see invoice date 4/26/2025” and submits April 26, 2025.
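Once the LLM submits an answer, the raw string still has to become a typed value. A small sketch using Python's `datetime.strptime`, assuming US month-first formats; the `DATE_FORMATS` tuple is hypothetical, and a real normalizer would need many more formats plus a policy for ambiguous strings such as 05/04/2025.

```python
from datetime import date, datetime
from typing import Optional

# Hypothetical set of accepted formats, tried in order (month-first is assumed).
DATE_FORMATS = ("%m/%d/%Y", "%Y-%m-%d", "%B %d, %Y")


def normalize_date(raw: str) -> Optional[date]:
    """Parse a submitted date string into a date, or None if no format matches."""
    cleaned = raw.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue
    return None
```

`strptime` accepts non-zero-padded months and days for `%m` and `%d`, so "4/26/2025" parses without extra preprocessing.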

3. Linking

Invoices only tell half the story, though. The charges on an invoice don't stand alone. They represent some kind of service rendered, like a container moving across the ocean or a truck carrying goods to a warehouse. Our next goal is to associate the invoice with any shipments that already exist in our system, so that we can audit the shipment against an invoice. If we can’t find any matches (likely because we haven’t received the relevant shipment documents yet), then we may create a brand new shipment with placeholder information.

This step is typically handled by heuristics that rely heavily on the quality of extraction. For the example invoice, we can match it to its shipment via the reference number PO-AC-45898 that our automation extracted.
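A minimal sketch of the reference-number heuristic, assuming shipments store a set of known reference numbers and that sharing any case-normalized reference counts as a match. The `Shipment` type, `normalize_reference`, and the `new_id` callback are hypothetical; Loop's actual linking heuristics are not described in detail.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Shipment:
    shipment_id: str
    reference_numbers: set[str]
    is_placeholder: bool = False


def normalize_reference(ref: str) -> str:
    # Ignore case and surrounding whitespace so " po-ac-45898" matches "PO-AC-45898".
    return ref.strip().upper()


def link_invoice(
    invoice_refs: list[str],
    shipments: list[Shipment],
    new_id: Callable[[], str],
) -> list[Shipment]:
    wanted = {normalize_reference(r) for r in invoice_refs}
    matches = [
        s for s in shipments
        if wanted & {normalize_reference(r) for r in s.reference_numbers}
    ]
    if matches:
        return matches
    # No existing shipment yet: create a placeholder keyed by the invoice's references.
    placeholder = Shipment(new_id(), set(wanted), is_placeholder=True)
    shipments.append(placeholder)
    return [placeholder]
```

Because the match rides entirely on extracted references, a single misread digit yields a placeholder instead of a link, which is why linking quality depends so heavily on extraction quality.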

During normalization, when automation fails to produce an answer for a given task, a human steps in to provide one. Similarly, if a human catches an incorrect answer from automation, they override it with the correct one. However, relying on a human quickly becomes a bottleneck for normalization. As a result, ensuring both accuracy and high automation rates in this process is crucial for scalability. While LLM automations work well in the general case, their inability to handle the nuances and edge cases of supply chain data prevents us from maximizing these metrics at scale.

Logistics is full of edge cases: a carrier that gets consolidated into a larger one but still uses its old logo, a factoring company sending us unusual invoice formats, or someone handwriting weight information on a BOL. To address this, we’ve embedded domain experts directly in the loop—not just as a fallback, but as a feedback mechanism. This way, humans don’t need to fix mistakes from automation over and over.

Injecting Domain Context to LLM Prompts

We need a way to empower LLMs to interpret messy, unstructured data with the same intuition that a seasoned auditor would.

While each atomic task shares a base set of instructions, this isn’t adequate for dealing with the nuances that come with analyzing supply chain data. We need an extra layer to flag special extraction cases that automation comes across. We call this layer entity context rules.

Conceptually, an entity context rule is a natural language instruction tied to a target entity and task type. We inject these rules into the LLM’s prompt, emphasizing that they take precedence over other, more general instructions. These rules primarily capture unintuitive, entity-specific knowledge that an LLM likely wouldn’t infer on its own. Here are some examples of rules:

- For any line item on an invoice from carrier A, the label “Document Charge” actually refers to a cross-border processing fee

- For any shipments from carrier B, the invoice date won’t be listed, but it is the same as the shipment pickup date

- Carrier C also does business under the name X
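A minimal sketch of an entity context rule as data, plus prompt injection that marks the selected rules as overriding the general instructions. The field names and precedence wording are assumptions; the post specifies only that a rule is a natural language instruction tied to an entity and a task type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntityContextRule:
    entity: str       # e.g. a specific carrier
    task_type: str    # e.g. "invoice_date", "line_items"
    instruction: str  # natural language guidance written by a domain expert


def applicable_rules(rules: list[EntityContextRule], entity: str, task_type: str) -> list[EntityContextRule]:
    """Select only the rules for this entity and task, so other rules stay out of the prompt."""
    return [r for r in rules if r.entity == entity and r.task_type == task_type]


def inject_rules(base_prompt: str, rules: list[EntityContextRule]) -> str:
    if not rules:
        return base_prompt
    bullets = "\n".join(f"- {r.instruction}" for r in rules)
    return (
        f"{base_prompt}\n\n"
        "Entity-specific rules. These take precedence over the general instructions above:\n"
        f"{bullets}"
    )


RULES = [
    EntityContextRule("Carrier A", "line_items",
                      'The label "Document Charge" refers to a cross-border processing fee.'),
    EntityContextRule("Carrier B", "invoice_date",
                      "The invoice date is not listed; it equals the shipment pickup date."),
]
prompt = inject_rules("Determine the invoice date.", applicable_rules(RULES, "Carrier B", "invoice_date"))
```

Here `prompt` contains only Carrier B's invoice date rule; Carrier A's line item rule never reaches the invoice date task.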

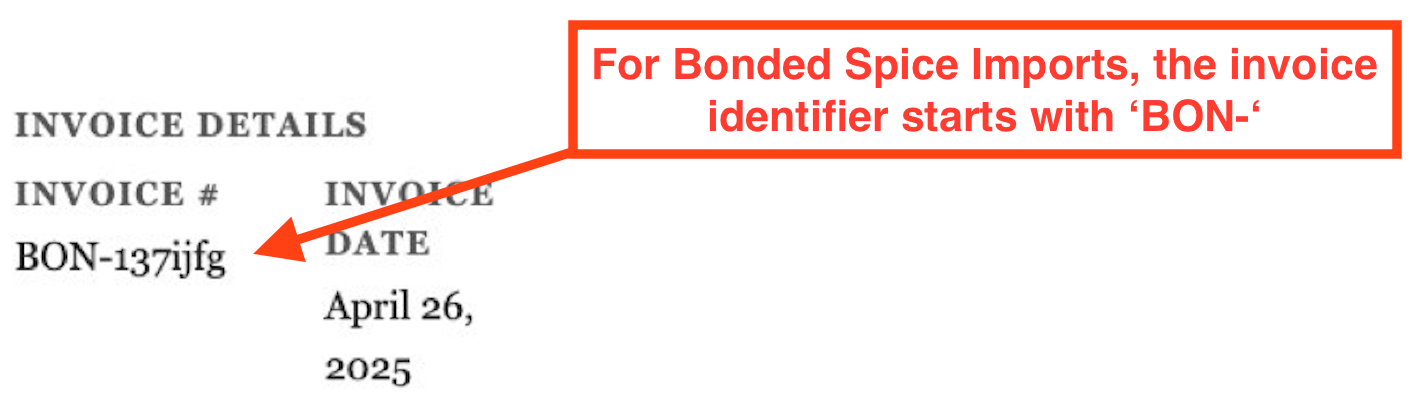

The invoice document from earlier is nicely formatted for extraction purposes, but an example of an entity context rule for the invoice identifier task could be the following:

Since these rules are specific to an entity and a task type, we can encode knowledge at the right level of specificity without bloating LLM context. It would be wasteful and confusing to put all of these rules in every prompt. Instead, we inject them into the prompt precisely when we need them. For example, before running the invoice identifier task, we wait for the carrier determination task to complete. This ensures the LLM sees carrier-specific context rules when deciding the invoice identifier.
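The dependency between tasks (carrier determination before invoice identifier) can be expressed as a small graph and ordered with the standard library's `graphlib.TopologicalSorter` (Python 3.9+). The `TASK_DEPENDENCIES` graph and the `run_task` callback are hypothetical illustrations, not Loop's scheduler.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Hypothetical graph: each task maps to the tasks that must finish before it runs.
TASK_DEPENDENCIES: dict[str, set[str]] = {
    "carrier": set(),
    "invoice_identifier": {"carrier"},
    "invoice_date": {"carrier"},
    "total_amount": set(),
}


def run_in_order(
    dependencies: dict[str, set[str]],
    run_task: Callable[[str, dict[str, str]], str],
) -> dict[str, str]:
    results: dict[str, str] = {}
    for task in TopologicalSorter(dependencies).static_order():
        # Every prerequisite already has a result here, so a task like
        # "invoice_identifier" can look up rules for the determined carrier.
        results[task] = run_task(task, results)
    return results
```

A static order serializes everything for simplicity; independent tasks such as `total_amount` could instead run in parallel using the sorter's `prepare()`, `get_ready()`, and `done()` methods.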

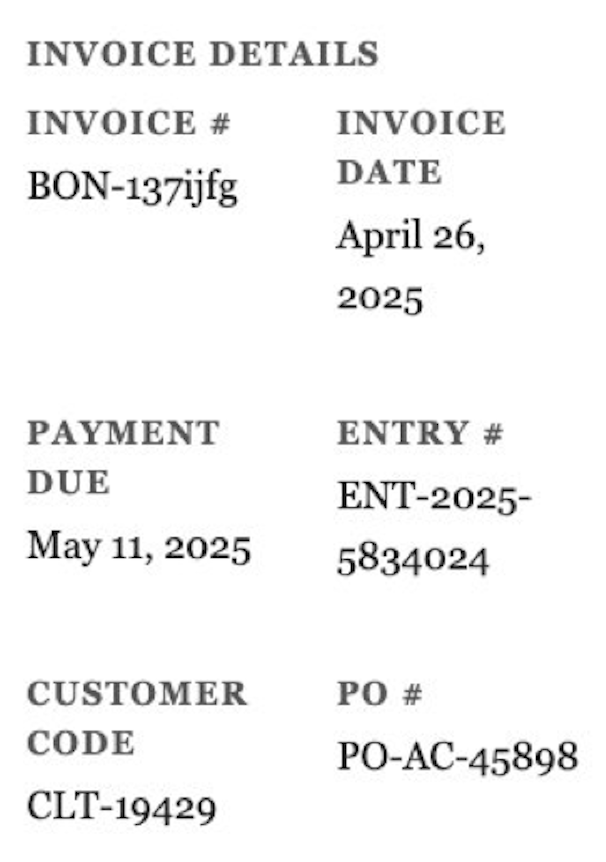

When a domain expert recognizes a need for custom guidance, they create a rule. This typically happens in one of two ways: they either proactively identify a complex extraction pattern, or they notice automation making the same mistake repeatedly. Let’s return to the invoice document from earlier. A naive LLM should have no problem extracting its invoice date (as shown below) as April 26, 2025.

But what if this invoice issuer routinely forgets to update the year on the invoice date? Now that we’re in 2026, clients would complain that we keep flagging their newly received invoices as a year overdue! This is a real issue we've run into with certain issuers. A domain expert would catch this problem and write the following rule to keep it from recurring:

On invoices from Bonded Spice Imports, the year is often incorrect in the invoice date label (but the month and day are correct). Infer the correct year by assuming that the invoice was issued in the last few months and take note of the current date.

Automation alone would never recognize that this was an issue. It takes a domain expert who has experience working with this issuer to catch it. Entity context rules are how we encode that expertise into our automation.
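At Loop this rule is natural language that the LLM interprets. For intuition only, here is the inference it asks for written as deterministic code: keep the printed month and day, and choose the most recent such date that is not after today. The `infer_recent_date` helper and its `max_years_back` bound are hypothetical and not part of Loop's system.

```python
from datetime import date


def infer_recent_date(month: int, day: int, today: date, max_years_back: int = 4) -> date:
    """Most recent date with this month/day that is not after `today` (printed year ignored)."""
    for year in range(today.year, today.year - max_years_back - 1, -1):
        try:
            candidate = date(year, month, day)
        except ValueError:  # e.g. Feb 29 in a non-leap year
            continue
        if candidate <= today:
            return candidate
    raise ValueError(f"no valid date for {month}/{day} in the last {max_years_back} years")
```

With `today=date(2026, 5, 10)`, an invoice printed as 4/26/2025 resolves to April 26, 2026, while the same invoice read on March 1, 2026 would resolve to April 26, 2025.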

Automatically Discovering Domain Knowledge

The situations in which we need entity context rules aren’t always obvious. Initially, we relied on humans to notice when a rule was needed, which meant that our coverage was only as good as what someone happened to catch. We needed to go a step further and discover critical domain knowledge automatically. To do this, we built a system called failure analysis.

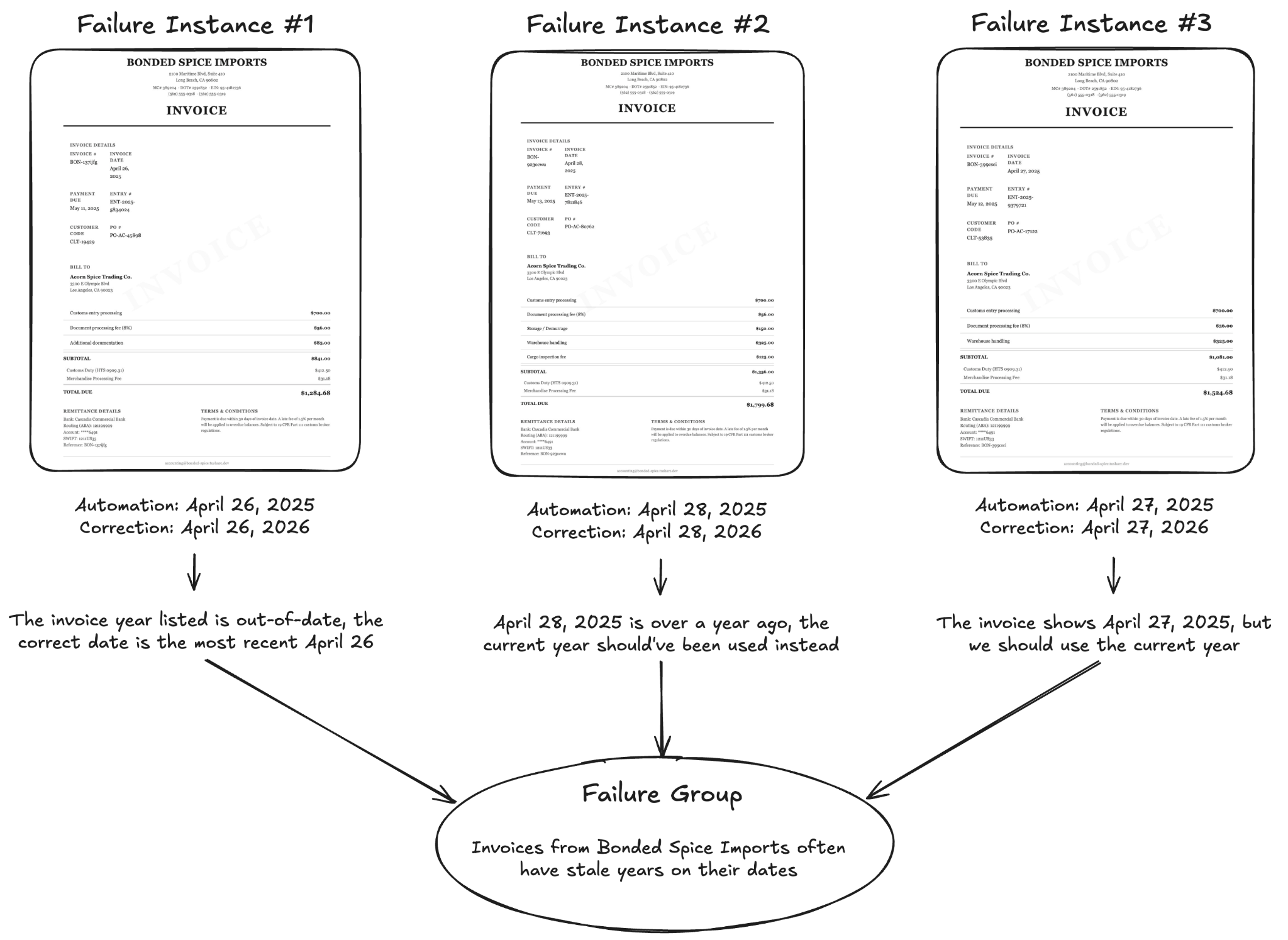

We define an automation failure in two ways: either automation intentionally leaves a task unanswered because it lacks confidence, or it provides an incorrect answer that is later corrected. In either case, we're left with a record of what automation got wrong and what the correct answer should have been. We persist each of these records as a failure instance. For example, let’s go back to the invoice document shown earlier. When a human corrected its invoice date from 2025 to 2026, we persisted that correction as a failure instance.
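A minimal sketch of recording the two kinds of failure, assuming an abstention is represented as a missing automated answer and that answers are compared as already-normalized strings (a simplification). `FailureInstance`, `record_failure`, and the `"invoice-123"` document id are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FailureInstance:
    document_id: str
    entity: Optional[str]
    task_type: str
    automated_answer: Optional[str]  # None when automation abstained
    correct_answer: str
    automation_reasoning: str

    @property
    def kind(self) -> str:
        return "abstained" if self.automated_answer is None else "incorrect"


def record_failure(
    document_id: str,
    entity: Optional[str],
    task_type: str,
    automated_answer: Optional[str],
    human_answer: str,
    reasoning: str = "",
) -> Optional[FailureInstance]:
    """Return a failure record if the human's answer shows automation failed; else None."""
    if automated_answer is not None and automated_answer == human_answer:
        return None  # automation was right; nothing to learn here
    return FailureInstance(document_id, entity, task_type, automated_answer, human_answer, reasoning)


date_fix = record_failure(
    "invoice-123", "Bonded Spice Imports", "invoice_date",
    automated_answer="2025-04-26", human_answer="2026-04-26",
    reasoning="Under 'Invoice details' I see invoice date 4/26/2025.",
)
```

Keeping automation's reasoning on each record matters later, when failures are examined as a group for a shared root cause.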

On its own, a single correction like this isn't particularly useful. One instance could just be random noise, like a typo in a document. The real value comes from analyzing failure instances in aggregate, where a group of similar ones points to a recurring problem, like consistently extracting the wrong date on one issuer’s invoices. We can identify groups of similar failure instances in two ways: by clustering semantic embeddings of LLM-generated failure summaries, or by grouping them heuristically along shared attributes like entities and task types. Once a group is formed, an agent evaluates whether it captures a shared failure pattern. It does this by examining automation’s reasoning across each failure instance in the group, looking for a common root cause. If it finds one, it will mark the group as valid.
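Of the two grouping strategies, the heuristic one is the easier to sketch: bucket failures by shared (entity, task type) and drop buckets too small to suggest a pattern. The `Failure` tuple and the `min_group_size=3` cutoff are assumptions; the embedding-clustering path and the validation agent are not shown.

```python
from collections import defaultdict
from typing import NamedTuple, Optional


class Failure(NamedTuple):
    """Minimal stand-in for a persisted failure instance."""
    document_id: str
    entity: Optional[str]
    task_type: str


def group_failures(failures: list[Failure], min_group_size: int = 3) -> dict[tuple, list[Failure]]:
    """Heuristic grouping on shared (entity, task type); small groups are treated as noise."""
    groups: dict[tuple, list[Failure]] = defaultdict(list)
    for failure in failures:
        groups[(failure.entity, failure.task_type)].append(failure)
    return {key: members for key, members in groups.items() if len(members) >= min_group_size}
```

Each surviving group is only a candidate; deciding whether its members share a root cause is still the agent's job.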

The failure group validation agent can also suggest corrective actions to fix automation going forward. One of these actions could be creating a new entity context rule to fill a domain knowledge gap. Others could be system improvements such as associating an address with a shipper, assigning a new name alias to a carrier, or updating the base prompt for a task. For the example failure group shown above for Bonded Spice Imports invoices, the agent could propose an entity context rule that addresses the root problem.

Each valid group is then presented to a human for review. By this point, it's been vetted twice: first by the grouping mechanism and then by the validation agent. This vetting helps ensure that most groups reaching a human are meaningful and actionable. The key shift here is that humans no longer need to proactively figure out how to address failures. Instead, failure analysis surfaces problems and suggests fixes that humans only need to review.
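A minimal sketch of the human review gate, assuming each suggested action carries a kind and a payload and that nothing is applied until a reviewer approves it. The `ActionKind` values mirror the corrective actions the post lists; `SuggestedAction`, `review`, and the `handlers` mapping are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class ActionKind(Enum):
    NEW_ENTITY_CONTEXT_RULE = "new_entity_context_rule"
    ASSOCIATE_ADDRESS_WITH_SHIPPER = "associate_address_with_shipper"
    ADD_CARRIER_ALIAS = "add_carrier_alias"
    UPDATE_BASE_PROMPT = "update_base_prompt"


@dataclass(frozen=True)
class SuggestedAction:
    kind: ActionKind
    entity: str
    task_type: str
    payload: str  # e.g. the proposed rule text or alias


def review(
    action: SuggestedAction,
    approved: bool,
    handlers: dict[ActionKind, Callable[[SuggestedAction], None]],
) -> bool:
    """Apply an agent-suggested action only after a human approves it."""
    if not approved:
        return False
    handlers[action.kind](action)
    return True
```

Keeping approval as an explicit argument makes the human gate easy to see, and easy to relax once the agent's suggestions are trusted.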

This system creates a feedback loop that allows our automation to continuously teach itself the intricacies of supply chain data with minimal manual intervention required. This property scales well as Loop continues to grow. Every new carrier, invoice format, and extraction edge case that we encounter introduces potential domain knowledge gaps that automation may not handle well out of the box. However, failure analysis ensures that those gaps are surfaced right away so that what’s unfamiliar at first quickly becomes well-handled.

Conclusion

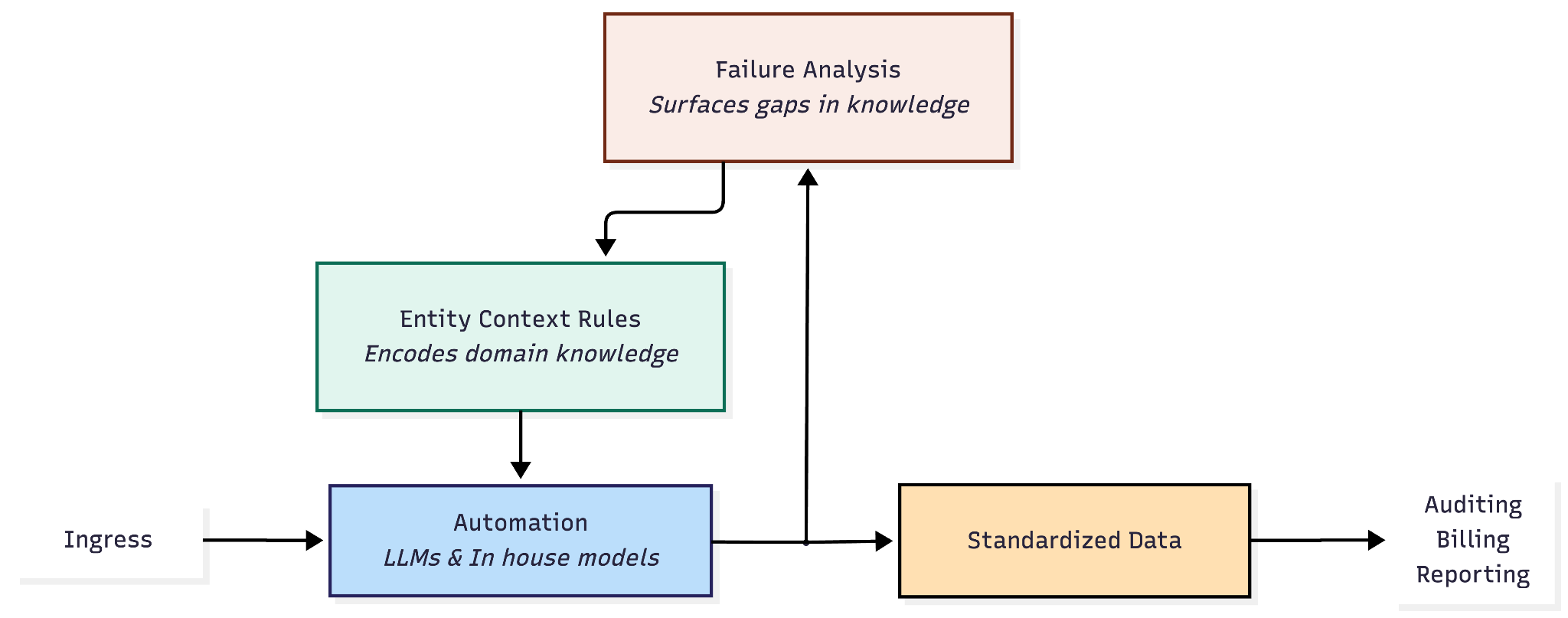

At Loop, scaling depends on infrastructure that automatically captures the nuances of supply chain data and surfaces them to our LLM automation. Entity context rules and failure analysis are two sides of that coin: one encodes domain expertise into our automation and the other ensures that we’re proactively discovering where new expertise is needed. Together, they create a feedback loop that improves automation with every edge case it encounters.

The next major step in this effort is to trust and enable the failure analysis agent to take corrective actions on its own, without needing human approval. As confidence in the system grows and our verification mechanisms mature, there’s a clear path toward letting the agent close the entire loop itself. At that point, we’ll have a system that doesn’t just automate supply chain work but autonomously develops the expertise to do it well.