Background

Loop is a logistics data platform company. We ingest invoices from thousands of carriers, normalize them, run audit checks, orchestrate payments, and surface discrepancies to our customers. The business logic is intricate and stateful – you're coordinating across external APIs, databases, S3, Snowflake, and third-party EDI networks, often for operations that take minutes to hours to complete.

Prior to Temporal, we already had well-functioning infrastructure for reactive, event-driven work: durable event consumers backed by Kafka, Amazon SQS for specific data ingress and egress paths, as well as pollers for periodic processing. Those patterns are still in place and work well for what they're designed for.

Where things got difficult was orchestration: managing multi-step business processes where each step has its own failure modes and retries, and where progress must be tracked across server restarts. For example: receive a carrier .zip archive of PDF files → unzip the archive → split each PDF into separate files based on document type (invoice, bill of lading, delivery receipt, etc.) → extract structured data from PDFs → normalize standard entities → run rate audits → queue discrepancies for review.

Doing that reliably, with good visibility into where failures occurred and easy recovery when they did, required either significant hand-rolled state machinery or a different tool entirely. Handling these processes through Kafka event consumers alone worked, but any issue would block the consumers. This caused several incidents, which we lovingly described as "latency going up and to the right".

We introduced Temporal in June 2023, two years after Loop was founded. What follows is a chronicle of how a single experiment grew into the backbone of our orchestration infrastructure.

Phase 1: Initial Launch (June 2023)

The initial Temporal integration was driven by a clear question: how do we run long-running, multi-step operations reliably in a codebase that's predominantly a stateless API?

The integration needed to feel native to our existing patterns. Loop’s backend is a highly modular NestJS monolith with rigorous dependency isolation. A few key pieces made it possible for us to integrate Temporal while respecting the domain dependency hierarchy:

- @Activity() / @Activities() decorators mark any NestJS service method as a Temporal activity. Combined with NestJS's DiscoveryService, the worker auto-discovers and registers all activities at startup, so no explicit wiring is required. Existing service logic could become a Temporal activity with a few decorators.

- A thin client wrapper enforces a consistent workflow ID scheme so workflow IDs are deterministic and deduplication is automatic: ${kebabCase(workflowType)}|${taskIdentifier}.

- OpenTelemetry trace interceptors were added within the first week. Temporal activities showed up as spans in our Datadog APM traces from day one.
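
As a rough illustration of the workflow ID scheme in the list above, the sketch below builds an ID in the `${kebabCase(workflowType)}|${taskIdentifier}` shape. The hand-written kebabCase helper and the buildWorkflowId name are assumptions made so the example runs standalone; they are not taken from Loop's client wrapper.

```typescript
// Hypothetical stand-in for whatever kebabCase implementation the real
// wrapper uses; it handles camelCase, spaces, and underscores only.
function kebabCase(input: string): string {
  return input
    .replace(/([a-z0-9])([A-Z])/g, "$1-$2") // split camelCase boundaries
    .replace(/[\s_]+/g, "-") // spaces and underscores become hyphens
    .toLowerCase();
}

// Builds a deterministic ID: the same workflow type and task identifier
// always produce the same string.
function buildWorkflowId(workflowType: string, taskIdentifier: string): string {
  return `${kebabCase(workflowType)}|${taskIdentifier}`;
}

// Example call with a made-up task identifier.
console.log(buildWorkflowId("updateRateAuditStatusWorkflow", "job-123"));
```

Because the ID is derived only from the workflow type and the task identifier, a second start request for the same task maps to the same workflow ID, which is what lets the server-side ID uniqueness check act as deduplication.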

The first production workflows landed quickly: Parcel CSV file ingestion to Snowflake, denormalized charge ingestion, shipment normalization, and an async password reset flow. This gave us early validation that the pattern held up across different problem shapes.

Phase 2: Use Case Expansion (July–December 2023)

With the platform proving itself, teams started reaching for Temporal for problems that had previously been awkward to solve reliably.

Parcel Ingestion Pipeline

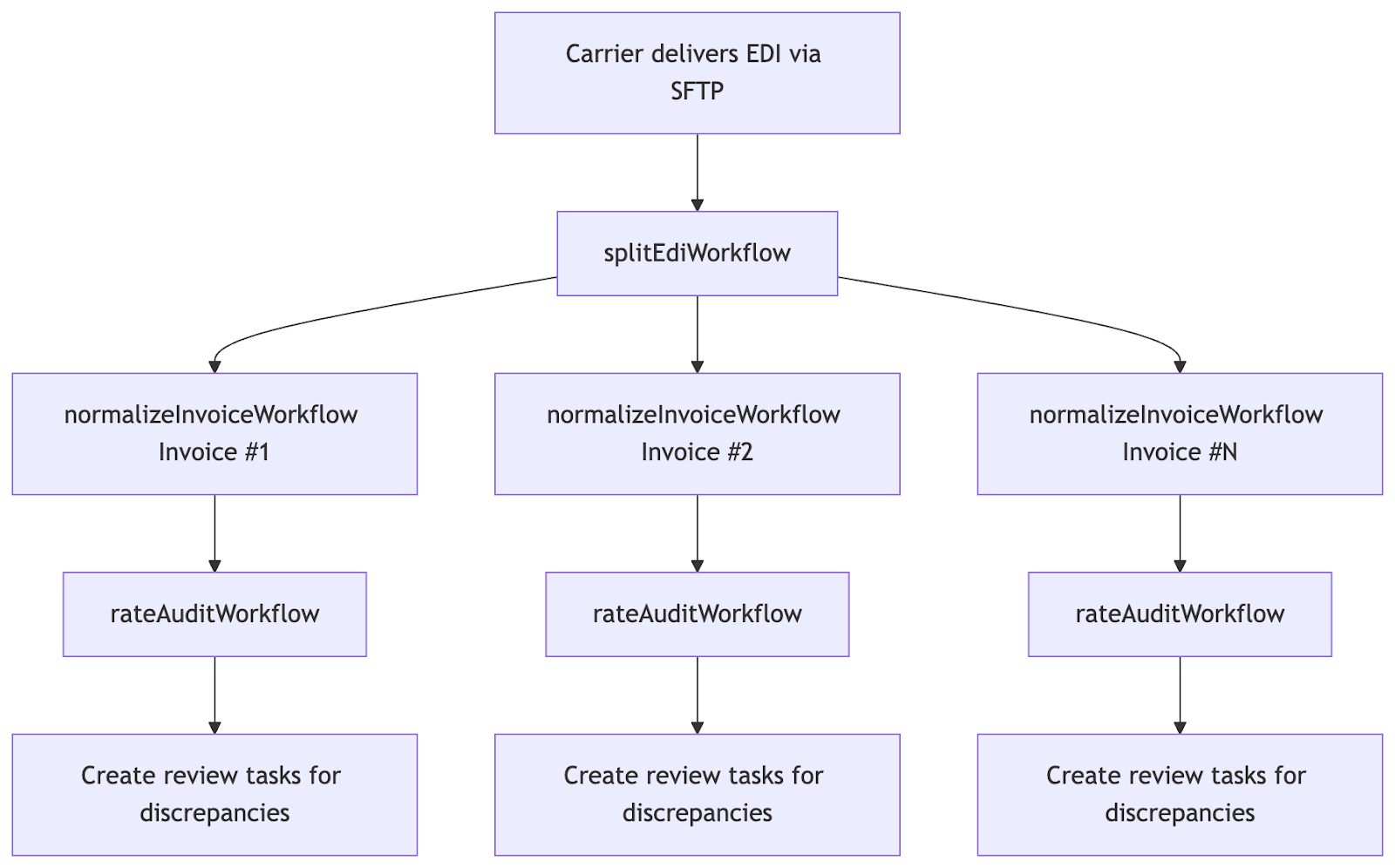

The parcel ingestion pipeline became one of the most compelling early Temporal use cases. It receives high-volume structured invoice files from UPS, FedEx, USPS, and others, with up to hundreds of thousands of charges per invoice. The pipeline has an inherent fan-out structure:

1. A carrier delivers an EDI file containing many invoices

2. Each invoice contains multiple charge groups, which in turn contain individual charges

3. Each invoice is normalized into our internal data model

4. Rate audits are run against each normalized charge group

5. Charges with discrepancies are queued for human review

Before Temporal, this was a sequential process with no good recovery story. If normalization for invoice #47 of 200 failed, you had to figure out which ones had already succeeded and re-drive the rest manually.

Temporal let us model this as a parent-child workflow hierarchy. A top-level "splitter" workflow fans out child workflows - one per invoice - each with its own retry policy and failure isolation. The parent tracks overall progress; a failing child doesn't abort the rest. By late 2023, UPS and FedEx splitting were both running in production as child workflow fan-outs.
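
The failure-isolation property of the fan-out can be sketched without the Temporal SDK. In the example below, plain async functions stand in for child workflows (in Temporal's TypeScript SDK they would be started from the parent with executeChild); the names splitterSketch, runChild, and InvoiceResult are hypothetical.

```typescript
type InvoiceResult =
  | { invoiceId: string; status: "completed" }
  | { invoiceId: string; status: "failed"; reason: string };

// Stand-in for a per-invoice child workflow.
type ChildFn = (invoiceId: string) => Promise<void>;

// Runs one child and converts a thrown error into a recorded outcome,
// so a failure stays local to that invoice.
async function runChild(invoiceId: string, child: ChildFn): Promise<InvoiceResult> {
  try {
    await child(invoiceId);
    return { invoiceId, status: "completed" };
  } catch (error) {
    const reason = error instanceof Error ? error.message : String(error);
    return { invoiceId, status: "failed", reason };
  }
}

// Fans out one child per invoice and waits for every outcome.
async function splitterSketch(invoiceIds: string[], child: ChildFn): Promise<InvoiceResult[]> {
  return Promise.all(invoiceIds.map((invoiceId) => runChild(invoiceId, child)));
}
```

The parent ends up with one outcome per invoice, so re-driving only the failed invoices becomes a filter over the results rather than a manual investigation.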

The rate audit step added another layer of complexity. Running a rate audit involves fetching contract rates, comparing them against billed amounts, and generating findings - each potentially calling external APIs. These activities are individually idempotent and individually retryable. A transient network failure to the rate engine doesn't restart the entire ingestion from scratch; it retries just the failed activity.
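
To make the "retry just the failed activity" behavior concrete, here is a minimal standalone sketch. It assumes a simplified retry policy with only a maximumAttempts field and omits backoff; in Temporal the policy is configured on the activity and the server handles the waiting. The names withRetry, runStepsSketch, and Step are hypothetical.

```typescript
interface RetryOptions {
  maximumAttempts: number;
}

interface Step {
  name: string;
  run: () => Promise<void>;
}

// Retries a single step until it succeeds or attempts run out.
async function withRetry(step: () => Promise<void>, options: RetryOptions): Promise<void> {
  let lastError: unknown = new Error("maximumAttempts must be at least 1");
  for (let attempt = 1; attempt <= options.maximumAttempts; attempt++) {
    try {
      await step();
      return;
    } catch (error) {
      lastError = error; // a real policy would wait with backoff before retrying
    }
  }
  throw lastError;
}

// Runs steps in order; steps that already succeeded are never re-run
// when a later step fails and is retried.
async function runStepsSketch(steps: Step[], options: RetryOptions): Promise<Map<string, number>> {
  const attempts = new Map<string, number>();
  for (const step of steps) {
    await withRetry(async () => {
      attempts.set(step.name, (attempts.get(step.name) ?? 0) + 1);
      await step.run();
    }, options);
  }
  return attempts;
}
```

The returned map records how many times each step ran, which makes it easy to see that a transient failure in one step leaves the attempt counts of the other steps at one.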

Phase 3: Consumer Migration (2024)

The Consumer Migration

As Temporal matured, we started reaching for it for a class of problem where it offers a meaningful advantage over a simple event consumer (or series of event consumers): orchestration across multiple steps or services, where a mid-process failure should be resumable rather than requiring a full restart.

What Moved

Over the course of 2024 and into 2025, a steady stream of multi-step processes moved to Temporal workflows - each because the work involved coordinating across multiple services or because durable progress was valuable:

- Payable approval automation (May 2024) - rules evaluation across several services

- Payment Service Provider network onboarding logic (June 2024) - cross-domain reconciliation with retries

- Artifact extraction automation (September 2024) - multi-stage document processing

- Job mode determination (February 2025) - multi-signal decision process

- Dozens more throughout 2024-2025

The selection criterion was consistent: if a process requires any substantial amount of resources, runs long enough that a server restart mid-run is realistic, or produces intermediate state worth preserving, it belongs in a workflow.

Phase 4: retryOnSignal and the Idempotent Worker Pattern (2025)

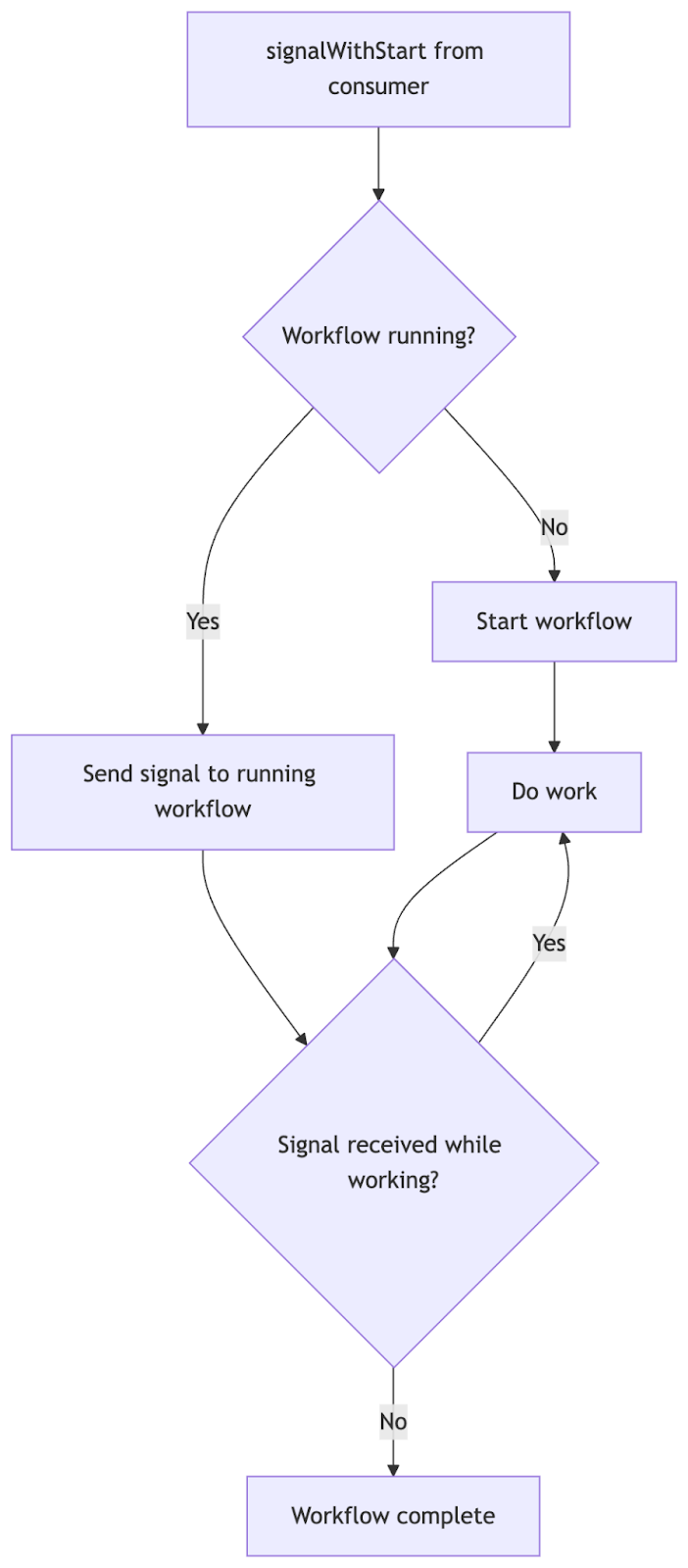

As more consumers migrated to Temporal, we encountered a new problem: event fan-in.

Consider a workflow that recomputes the rate audit status for a shipment job. Events that should trigger a recomputation arrive from multiple sources: invoice revision events, task completion events, payment events. Under the old event-consumer-only model, each event was processed independently. Under Temporal, we want a single running workflow per shipment job - but we also need to ensure that if a new event arrives while the workflow is running, the workflow re-runs after completion rather than dropping the event.

The solution combines signalWithStart with retryOnSignal.

A workflow using this pattern looks like:

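    // retryOnSignal, updateRateAuditStatusSignal, and updateRateAuditStatus
    // are internal helpers; their imports are omitted here.
    export async function updateRateAuditStatusWorkflow({
      shipmentJobId,
    }) {
      await retryOnSignal(
        [{ shipmentJobId }],
        updateRateAuditStatusSignal,
        // Core work unit; re-run if the signal arrives while it is in flight.
        async () => {
          await updateRateAuditStatus({ shipmentJobId });
        },
      );
    }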

The retryOnSignal utility (formalized in March 2025) wraps the core work in a loop:

- If no workflow is running for this entity, signalWithStart creates one

- If a workflow is already running, signalWithStart sends a signal to it

- The retryOnSignal loop inside the workflow checks for pending signals after each work unit

- If a signal arrived while working, the workflow loops and re-runs the work

This pattern lets high-frequency event streams converge onto a single, deduplicated workflow execution per entity - without losing any events.
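
The loop semantics described above can be sketched in isolation. This is a hypothetical re-creation, not Loop's retryOnSignal: an in-memory flag stands in for the Temporal signal, and the PendingSignal and retryOnSignalSketch names are invented for the example.

```typescript
// In-memory stand-in for a Temporal signal: it only remembers whether
// at least one signal has arrived since the last check.
class PendingSignal {
  private pending = false;

  deliver(): void {
    this.pending = true;
  }

  // Reports whether a signal was pending, then clears the flag.
  take(): boolean {
    const wasPending = this.pending;
    this.pending = false;
    return wasPending;
  }
}

// Runs the work once, then again for as long as signals keep arriving
// while the work is in flight. Returns the number of runs.
async function retryOnSignalSketch(
  signal: PendingSignal,
  work: () => Promise<void>,
): Promise<number> {
  let runs = 0;
  signal.take(); // the signal that started the workflow is covered by the first run
  do {
    await work();
    runs += 1;
  } while (signal.take()); // anything that arrived mid-run triggers one more pass
  return runs;
}
```

Note that several signals arriving during a single run collapse into one re-run. That is safe only because the work recomputes from current state rather than from the event payload, which is the idempotent-worker half of the pattern.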

Phase 5: The Self-Hosted Migration (February–April 2025)

Until early 2025, all of our Temporal workflows ran on Temporal Cloud - Temporal's managed SaaS offering. Temporal Cloud is operationally convenient (no infra to manage), but at scale it becomes expensive. By late 2024 we were executing millions of activities per week and growing fast. With 30+ task queues, hundreds of workflow types, and throughput only heading in one direction, we decided to run our own.

Standing Up Temporal Server

In February 2025, we introduced support for a self-hosted run mode alongside the existing Temporal Cloud connection. The infrastructure team provisioned a self-hosted Temporal cluster running in AWS, with an AWS RDS PostgreSQL persistence backend.

Migrating from one Temporal Server to another is relatively easy:

- In our backend, point start/signalWithStart requests to the new server

- Keep running workers serving both the old and new servers

- Wait for the old server to drain

Our first self-hosted Temporal Server, deployed with the publicly available Temporal Server Helm chart on AWS Elastic Kubernetes Service and backed by AWS RDS Postgres, immediately produced significant savings. Cost per billable action fell by over 60% compared with Temporal Cloud, which has saved us hundreds of thousands in costs as we've continued to scale up in both volume and functionality (we now run more than 1 billion billable actions per month).

We did a similar migration again in early 2026, from one self-hosted Temporal Server to another, to realize greater cost savings by utilizing ScyllaDB (Cassandra-like) instead of RDS Postgres. Look out for another blog post going into detail on this process!

Phase 6: Observability as a First-Class Concern (Late 2024)

As Temporal became mission-critical infrastructure, the team invested heavily in observability tooling.

FailedTemporalWorkflow

In November 2024, we stood up a FailedTemporalWorkflow entity - a database table tracking every workflow execution that reached a terminal failure state. In this system:

1. An interceptor captures Temporal workflow failures

2. Failures are persisted to a database table

3. An internal API exposes a failure summary - aggregated by workflow type, owner team, task queue

4. Engineers can trigger a "redrive" from the UI - re-running a failed workflow with its original arguments

This moved "check if workflows are failing" from a tedious Temporal UI search to a structured internal product experience. Teams can clean up their dashboards after investigating failures without touching the Temporal UI directly.
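
The aggregation behind such a failure summary could look roughly like the sketch below. The record fields and the summarizeFailures name are assumptions chosen to mirror the grouping dimensions mentioned above (workflow type, owner team, task queue); the real table schema is not described here.

```typescript
// Hypothetical shape of one persisted failure row.
interface FailedWorkflowRecord {
  workflowId: string;
  workflowType: string;
  ownerTeam: string;
  taskQueue: string;
}

interface FailureSummaryRow {
  workflowType: string;
  ownerTeam: string;
  taskQueue: string;
  count: number;
}

// Groups failures by (workflowType, ownerTeam, taskQueue) and returns
// the groups with the most failures first.
function summarizeFailures(records: FailedWorkflowRecord[]): FailureSummaryRow[] {
  const rows = new Map<string, FailureSummaryRow>();
  for (const record of records) {
    const key = [record.workflowType, record.ownerTeam, record.taskQueue].join("|");
    const existing = rows.get(key);
    if (existing) {
      existing.count += 1;
    } else {
      rows.set(key, {
        workflowType: record.workflowType,
        ownerTeam: record.ownerTeam,
        taskQueue: record.taskQueue,
        count: 1,
      });
    }
  }
  return Array.from(rows.values()).sort((a, b) => b.count - a.count);
}
```

Keeping the original workflow ID on each record is what would make a redrive possible later, since the failed execution and its arguments can be looked up from it.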

We later added tracking for timed-out workflows (which interceptors cannot capture) by periodically polling the Temporal Server.

Phase 7: Temporal for AI Agents (2025–2026)

The most recent and arguably most interesting evolution: using Temporal as the execution engine for AI agents. The mature developer experience surrounding Temporal at Loop was a big leg up for the AI team, and made setting up long-running agents in our system a breeze.

The Problem

AI agent loops have several properties that make them hard to run as simple HTTP request handlers:

- They're long-running - an agent researching a freight rate anomaly might run for minutes, making multiple LLM API calls

- They're stateful - the agent needs to track conversation history, tool call results, and progress

- They're interactive - users might send follow-up messages mid-run, or request cancellation

- They need durability - an LLM API timeout shouldn't lose all the agent's progress

Temporal checks every box.

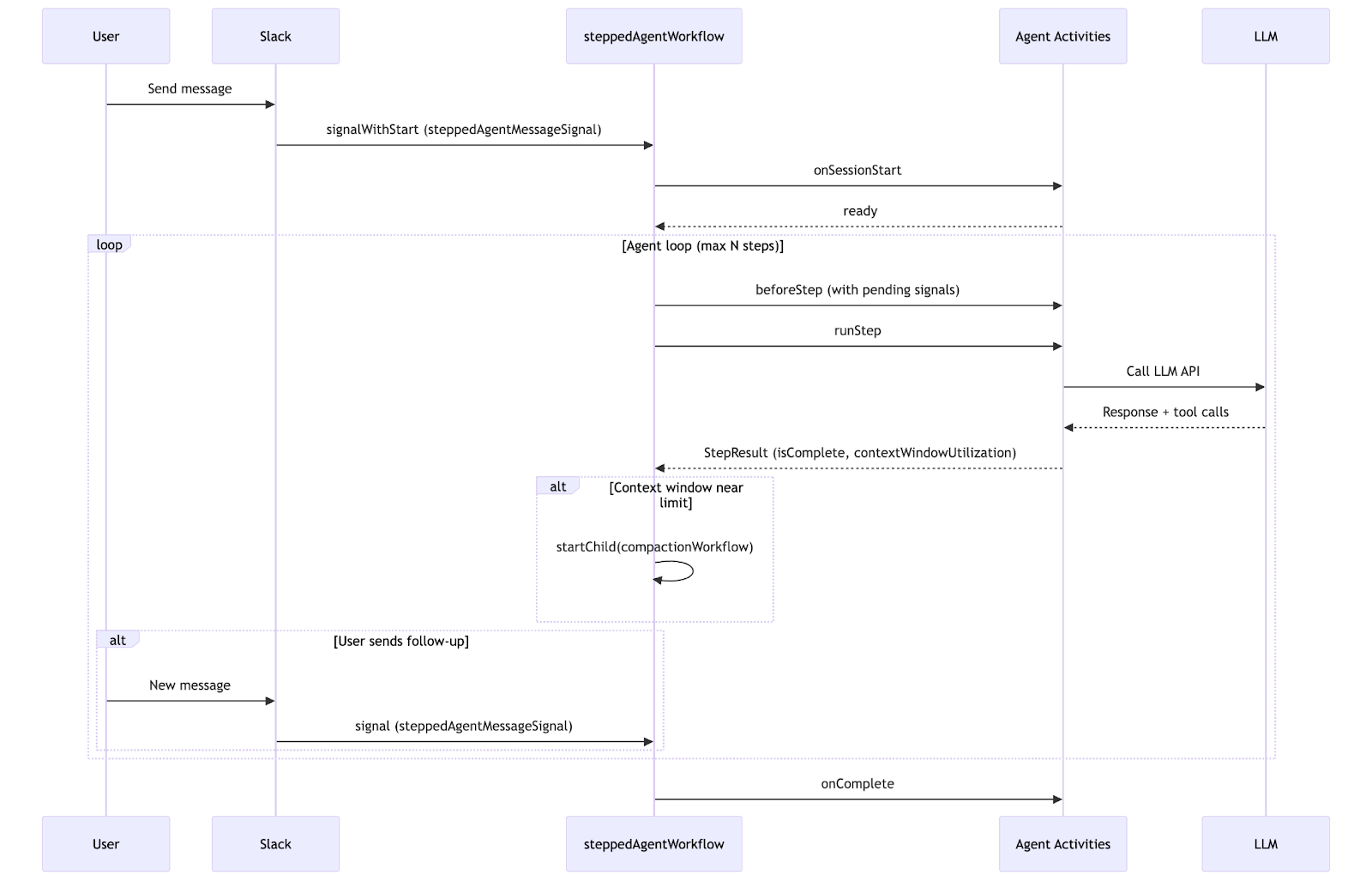

The Evolution: Per-Agent → Generic Stepped Workflow

September 2025: The LLM chat completion pipeline moved to Temporal. Every LLM call became a Temporal activity. If the LLM API returned a 429 or timed out, Temporal's retry policy handled the backoff automatically.

September 2025: Our internal Slack bot got its full agent loop on Temporal. A slackBotWorkflow runs for the duration of a conversation, receiving new messages via Temporal signals and calling LLM activities to generate responses.

October 2025: The "stepped workflow" pattern was extracted from the Slack bot implementation and applied to the rules engine rule author agent. The pattern: instead of one long-running activity that runs the entire agent loop, each "step" (one LLM call + tool execution cycle) is its own activity. This gives Temporal visibility into each step's input/output, enables per-step heartbeating, and allows the workflow to checkpoint progress between steps.

February 2026: A single generic steppedAgentWorkflow replaced three separate per-agent workflow implementations.

Each agent implements a SteppedAgentWorkflowDelegate interface with lifecycle hooks: onSessionStart, beforeStep, runStep, onComplete, onError, onCancelled. A NestJS DiscoveryService-based registry auto-discovers all delegates at startup. The workflow resolves the correct delegate by its identifier string at runtime.
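
A delegate-driven step loop of this kind might be sketched as follows. Only the hook names come from the description above; every signature, the StepResult shape, the in-memory registry, the isCancelled callback, and the maxSteps limit are assumptions made so the example runs without the Temporal SDK or NestJS.

```typescript
interface StepResult {
  done: boolean;
}

// Hook names follow the text; the signatures are illustrative guesses.
interface SteppedAgentDelegateSketch {
  onSessionStart(): Promise<void>;
  beforeStep?(stepNumber: number): Promise<void>;
  runStep(stepNumber: number): Promise<StepResult>;
  onComplete(): Promise<void>;
  onError(error: unknown): Promise<void>;
  onCancelled(): Promise<void>;
}

// Stand-in for a discovery-based registry: delegates keyed by identifier.
const delegateRegistry = new Map<string, SteppedAgentDelegateSketch>();

type Outcome = "completed" | "cancelled" | "failed";

// Resolves the delegate by identifier, then runs one step at a time,
// checking for cancellation between steps.
async function steppedAgentSketch(
  delegateId: string,
  isCancelled: () => boolean,
  maxSteps = 50,
): Promise<Outcome> {
  const delegate = delegateRegistry.get(delegateId);
  if (!delegate) {
    throw new Error(`unknown delegate: ${delegateId}`);
  }
  try {
    await delegate.onSessionStart();
    for (let step = 1; step <= maxSteps; step++) {
      if (isCancelled()) {
        await delegate.onCancelled();
        return "cancelled";
      }
      await delegate.beforeStep?.(step);
      const result = await delegate.runStep(step);
      if (result.done) {
        await delegate.onComplete();
        return "completed";
      }
    }
    throw new Error("step limit reached");
  } catch (error) {
    await delegate.onError(error);
    return "failed";
  }
}
```

Because each pass through the loop is a separate call into the delegate, each step is a natural unit for recording inputs and outputs and for checkpointing progress.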

The workflow structure:

Notable details:

- LLM context window compaction: if stepResult.contextWindowUtilization exceeds 80%, the workflow fires a child llmChatCompactionWorkflow to summarize earlier conversation turns - preventing context overflow without interrupting the agent

- Subagent messaging: Temporal's parent-child workflow relationships and support for workflow signalling allowed us to trivially stand up subagent workflows, and support signalling with context between these workflows

- Graceful cancellation: a cancelSteppedAgentSignal allows mid-run cancellation; the workflow calls onCancelled before exiting

- Optional hook detection: the workflow uses prototype comparison to detect whether a delegate has overridden optional hooks (beforeStep, onCancelled), skipping the activity round-trip when they're not implemented
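
Two of the details above, the 80% compaction threshold and prototype-based hook detection, reduce to small checks that can be sketched on their own. The base class, the delegate class, and the function names below are hypothetical, and the utilization value is assumed to be a fraction between 0 and 1. How the comparison result reaches the workflow is not described, so the sketch shows only the comparison itself.

```typescript
// Base class whose optional hooks default to no-ops.
class BaseDelegateSketch {
  async beforeStep(): Promise<void> {}
  async onCancelled(): Promise<void> {}
}

type OptionalHook = "beforeStep" | "onCancelled";

// A hook counts as overridden when the method found on the instance
// is not the same function object as the base-class default.
function overridesHook(delegate: BaseDelegateSketch, hook: OptionalHook): boolean {
  return delegate[hook] !== BaseDelegateSketch.prototype[hook];
}

// Example delegate that overrides only beforeStep.
class RuleAuthorDelegateSketch extends BaseDelegateSketch {
  async beforeStep(): Promise<void> {
    // per-step preparation would go here
  }
}

const COMPACTION_THRESHOLD = 0.8;

// True when utilization strictly exceeds the threshold.
function needsCompaction(contextWindowUtilization: number): boolean {
  return contextWindowUtilization > COMPACTION_THRESHOLD;
}
```

With a check like this, a caller can skip the round-trip for hooks that still point at the base-class no-op and schedule work only for hooks a delegate actually implements.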

What the Numbers Look Like

Three years of Temporal adoption at Loop:

Temporal's orchestration capabilities, plus our investment in developer experience, infrastructure, and error recovery, have allowed us to apply it extensively throughout the codebase. Without them, we would not be able to support either the breadth (number of documents) or the depth (level of detail) of processing we currently perform for our clients.

What's Next

The stepped agent pattern is still young. We're exploring:

- Multi-agent coordination - workflows that orchestrate multiple agent delegates in sequence or parallel

- Long-running human-in-the-loop workflows - agents that pause execution waiting for a human approval signal, then resume

The debouncer service is a template for future platform components that need to sit in front of Temporal - a sidecar layer that provides flow control, buffering, or protocol adaptation without coupling to the TypeScript application.

Temporal has become infrastructure in the same way Postgres and Redis are infrastructure. You don't think about whether to use it - you think about which task queue, which retry policy, and whether your activity needs a heartbeat.

---

*Thanks to Branden, Sean, Max, Tushar, Evan, and everyone who contributed to the Loop Temporal platform over the past three years.*