I've been at Loop for four months. In that time, I've merged around 170 pull requests across frontend and backend, and gone from onboarding to owning a production system powered by LLMs.

I joined Loop because I wanted to own problems, not just write code for someone else's solution. I wanted to join a fast-moving team where the gap between having an idea and seeing it in production would be small. What I didn't fully expect was how much AI would reshape what "the work" even means.

Owning the outcome

I gravitated toward early-stage startups in university because I wanted to build things from scratch for real users. Loop has been the fullest expression of that so far. I deployed my first pull request to production on day one and shipped the frontend for a critical payment workflow within my first two weeks.

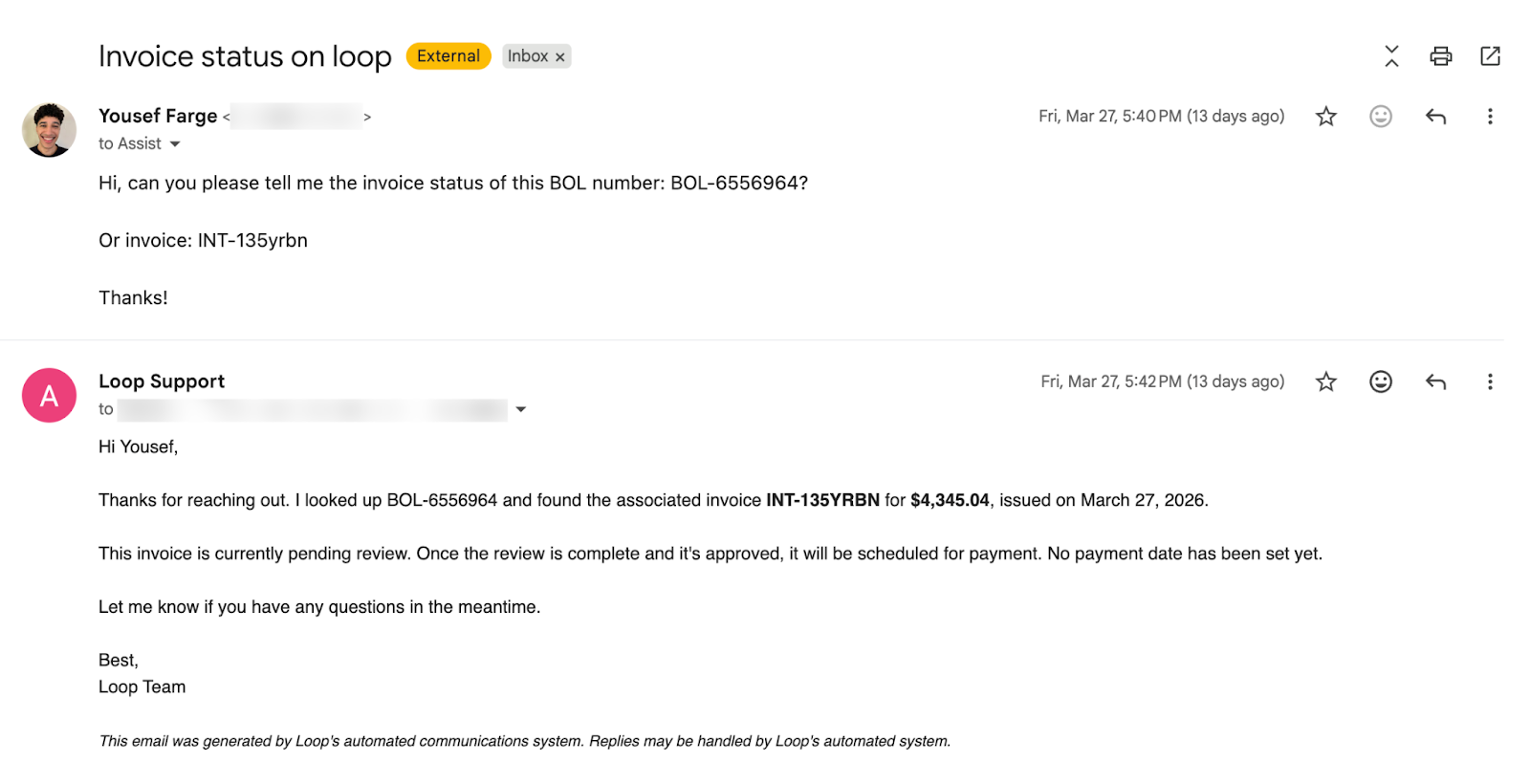

Over four months, the scope kept growing. I built internal tooling that gives our operations team direct control over payment workflows that previously required engineering intervention. I created client-facing views that surface payment status in real time. And I integrated a carrier-facing AI agent that reads inbound support emails, pulls the relevant invoices, and automatically responds to inquiries.

Taking an abstract problem, breaking it down into tractable parts, and iterating until it meets the customer need – that end-to-end ownership is what motivated me to join Loop, and what I saw mirrored in my colleagues from day one.

The people

My first week at Loop coincided with a visit from engineers in our San Francisco office. I’d barely settled in before they arrived, and with them came a whole new round of introductions.

But this early exposure to the wider team offered a clear window into the company culture. A moment I often reflect on happened during onboarding, when I got stuck and called an engineer over for help. Soon, a semi-circle of five engineers had gathered, all intently focused on my screen.

That memory captures the Loop spirit. Whatever they were working on, they dropped it immediately to make sure the new team member got unblocked.

That collaborative attitude hasn't diminished. A couple of months in, I needed to build on top of newly released infrastructure from the AI platform team in San Francisco. Within hours, I was on a call with the engineers who built it, walking them through my design. This willingness to help is universal because everyone knows the favor will be returned, and that reciprocity keeps progress fast. As Tushar put it, "we are implementing these things faster than I can come up with next steps."

AI at every layer

AI is woven into how we work at Loop in a way that's hard to convey until you've lived it.

When I started, I was new to the domain, the codebase, and the tooling. AI tools like Claude Code shortened the ramp-up in a way I wasn't expecting. I could explore an unfamiliar part of the system, ask questions about how things fit together, and get oriented in minutes instead of hours.

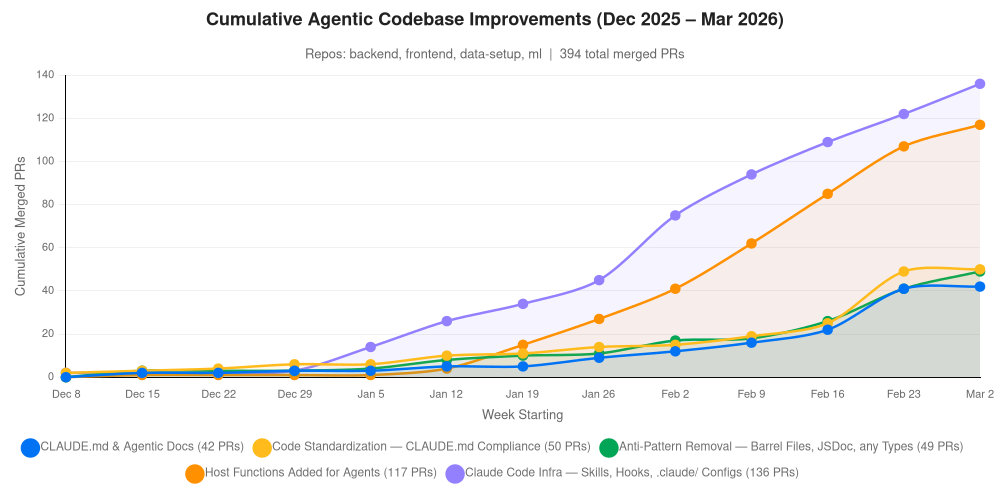

I wasn't the only one leaning on these tools. Toward the end of Q4 2025, a new generation of models landed, and almost overnight the balance between hand-written and AI-generated code flipped across the whole organization: where maybe 80% of code had been written manually, roughly 80% was now AI-generated. Nobody mandated this, and there was no top-down push. Engineers independently arrived at the same conclusion: this is the most efficient way to get the work done.

This rapid shift has driven a push to make the codebase even friendlier to agentic contributions. In practice, that means standardizing patterns across the codebase, improving the CLAUDE.md documentation, and developing new AI skills for common tasks.

But AI at Loop isn't just a development tool – it's a building block inside the product itself. The support email platform uses an LLM agent that can read email threads, look up invoices, parse file attachments, and answer basic inquiries. The first time I got it working on a real carrier email and watched it pull the right invoices, read an aging report, and compose a correct reply, my teammate and I just looked at each other. "Computers were not a mistake," he said.

We're using AI to build the product and embedding AI into the product. Every improvement to how we develop feeds into what we ship, and what we ship teaches us how to develop better.

That convergence is what makes this feel like the right place to be building right now.

What’s next?

I’m 120 days in, and the support email platform is live and handling real carrier traffic. The AI tooling we’ve built is becoming part of how the whole team operates. That’s not where this ends: we plan to extend the agent beyond read-only queries and reasoning so it can resolve customer problems, such as settling disputes and creating claims, end-to-end without human intervention. I came to Loop looking for ownership and speed, and four months in, the problems are only getting more interesting.